Vibemap ETL Pipeline Process

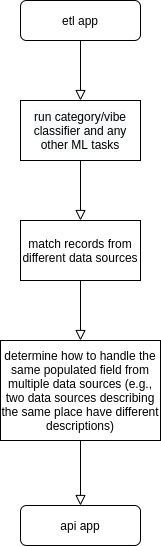

At a high level, there are two layers of data about places and events in Vibemap: the scraping and other data sourcing code that collects as much data about places and events from as many data sources as possible. This code lives in the etl Django app. The second, "higher" layer is the actual data served out of the REST API and consumed by the mobile and web apps. This is in the api Django app and at the time of this writing uses Django REST Framework (we'll likely move toward a combination of ElasticSearch and possibly some other better-performing framework). Unlike the etl app, the api app should be the single source of truth for a place or event in Vibemap, essentially combining the best, most accurate, most timely information from every record about the same place or event from the etl app. A rough process diagram of this would look something like:

This middle layer between the etl and api apps is currently a mishmash of inconsistent spaghetti code that really needs some work, preferably just rewritten from scratch.

The etl app

This Django app, in the etl folder of the project root, is where all of our core data models and scraping and machine learning code live. The most prominent libraries that we use here are:

- the Django ORM, for defining data models and interacting with the database, including the GeoDjango addition for spatial data

- scrapy, for crawling web sites

- Celery, for orchestrating tasks asynchronously

- scikit-learn and spaCy for numerous text classification and other NLP tasks

Data Models Overview

The data models live in the etl/models/ directory. Many of them correspond to the models from https://schema.org, both because they are mostly well-modeled and many websites use those models in microdata. Other models are specific to Vibemap, such as etl.models.vibes.VibeTime and etl.models.data_source.DataSource.

DataSource

This is a simple but important model that is mostly used internally for data governance. Most etl model records should have a foreign key to a DataSource record. The name field on DataSource is unique (case-insensitive), and is the primary way of identifying them.

Place

Modeled after https://schema.org/Place. This is meant to be the most generic, basic level of describing some defined physical entity on planet Earth. A restaurant is a Place, but so is a city park, or the boundaries of a street fair. Eventually, we would like to start discerning between LocalBusiness, LodgingBusiness, FoodEstablishmentBusiness, because those models have additional fields more specific to the entities they model (like price range, or cuisine for FoodEstablishmentBusiness). So, a Place could have multiple foreign keys to LocalBusiness, such as a food hall Place that has multiple FoodEstablishmentBusiness inside it.

Event

Modeled after https://schema.org/Event. Although not enforced at the database level for the etl layer (it is at the api layer), all Events should have foreign keys to Places. Before the current COVID-19 pandemic, a particular challenge was trying to filter out virtual events that did not actually occur at a physical place but which the organizers nonetheless provided precise geographic coordinates in an effort to spam their event on as many platforms as possible. Now that almost all events are virtual for the near future, we have an additional challenge of trying to determine which virtual events are being organized by organizations that would normally be holding these events at their physical places of business. An example of this in Chicago would be Cole's Bar Virtual Comedy Open Mic.

EventCategory

For storing categories about Events via a foreign key. Our categories are defined in a YAML config file in etl/config/categories.yml

EventSubcategory

Optional subcategories that have a foreign key to EventCategory. Also defined in etl/config/categories.yml

LocalBusiness

Modeled after https://schema.org/LocalBusiness. As mentioned in Place, we don't use this model much but we would like to start, because it has additional specific fields for local businesses, such as more categories (in the local_business_type field) and price_range.

FoodEstablishmentBusiness

A more specific type of LocalBusiness, modeled after https://schema.org/FoodEstablishmentBusiness. Has additional fields specific to food establishments, such as serves_cuisine and accepts_reservations_url.

LodgingBusiness

A more specific type of LocalBusiness modeled after https://schema.org/LodgingBusiness. Has additional fields specific to lodging businesses, such as more categories (in the lodging_business_type field) and checkin_time/checkout_time.

PlaceGeometry

This is a GeoDjango model containing geometry for Places. Could be points, linestrings, or polygons. This should be considered the definitive source for the geometry of a place to be displayed on a map.

PlaceCategory

For storing categories about Places via a foreign key. Our categories are defined in a YAML config file in etl/config/categories.yml

PlaceSubcategory

Optional subcategories that have a foreign key to PlaceCategory. Also defined in etl/config/categories.yml

OpeningHoursSpecification

Modeled after https://schema.org/OpeningHoursSpecification. This is a critical piece of data to have about Places/LocalBusiness, but it is also one of the most difficult to obtain. Many data sources don't have it, or they do but the format is completely unstructured (this a real example from one of our "better" data sources):

Dinner: seven days

Saturday & Sunday brunch

Open late: Saturday till 3, other nights till 2

OpenStreetMap uses a different convention, that, even if populated (most aren't), can be very out-of-date.

Foursquare uses yet another format that is easy to parse, but would cost a king's ransom to use at scale (and even they are not immune to returning out of date information).

PostalAddress

Modeled after https://schema.org/PostalAddress. This model is somewhat unique in that it does not require a foreign key to a DataSource. Addresses are messy and are really only needed for two things: displaying to the user and using as the input to a geocoding service. Also, large footprint places (or any place with multiple entrances) can have multiple addresses.

GeocoderResult

A result from a geocoding service. If a data source provides both an address and precise geographic coordinates then we typically create both PlaceGeometry and GeocoderResult from that source, in order to conserve limited usage quotas from various external geocoding services.

Review

Modeled after https://schema.org/Review

Rating

Modeled after https://schema.org/Rating

Vibe

A Vibe corresponds to an Event. We currently define a list of "acceptable" vibes in etl/config/classifiers_vibes.yml. Vibes can be scored with a numeric value so that we can weight the relative "intensity" of one vibe over another for the same Event.

VibeTime

A VibeTime corresponds to a Place. In addition to the vibe and score fields that function the same as Vibe for Events, VibeTime has additional fields, time_of_day and day_of_week (possible values are listed in etl.models.constants in TimeOfDayChoices and DayOfWeekChoices). This is because Places could have different vibes at different times of day on different days of the week.

SocialMediaAccount

A social media account associated with a Place or LocalBusiness. A challenge here is determining if an account is specific to a single physical place, or if it's a generic account for organizations with multiple locations.

Classifiers

Our category and vibe classifiers live in etl/classifiers/. These classes take some input corpus of text describing a Place or Event (usually the description, if available), but we'd also like to enhance these to allow them to incorporate other data, like Reviews or using attributes about past or upcoming Events to infer additional vibes about the Place venue for those Events, for example.

Sourcing New Data

To quickly start extracting data from a new data source, you should create a new class that subclasses etl.scrapers.base.ETLPipelineExtractor. This class sets up a lot of the scaffolding and provides hooks for overridden methods to set up all the relationships among various models in the etl app. Most of the methods in this base class are extensively documented, so you should look there for details. To see a real-world example that subclasses this base class, check out etl.scrapers.alt_weeklies.base.AltWeekly and etl.scrapers.alt_weeklies.east_bay_express.EastBayExpressExtractor

Cookbook

Here is an example of one of the highest-need tasks of the "middle" layer between multiple etl needing to be combined into one api record.

First, get the top 100 api places that have the most number of data sources (this is not actually accurate because of duplicate data_sources entries, but we'll ignore that for now):

from api.models import HotspotsPlace

from django.db.models import F, Func

api_places = HotspotsPlace.objects.annotate(dscount=Func(F('data_sources'), function='jsonb_array_length')).order_by('-dscount')[:100]

Then, try to find some of the etl records that created this one api record

from etl.models.place import Place

data_source_names = set()

for place in api_places:

for data_source in place.data_sources:

data_source_names.add(data_source['name'])

etl_places = Place.objects.filter(name__in=[x.name for x in api_places], data_source__name__in=data_source_names)

Now you can inspect all the etl records that created the single api record. Most likely, some will have some fields populated while others do not, or they might have different description fields. Determining "tie-breakers" like this and generally determining how to create a single API record from multiple ETL records is aa critical feature we need to improve